1. Introduction

In the field of numerical analysis, interpolation methods are frequently employed for function approximation, numerical differentiation, and numerical integration. A wide variety of interpolation techniques are commonly used, including interpolation formulas for non-uniform nodes [1], uniform node interpolation formulas [2], linear interpolation methods, Lagrange's interpolation formula, and spline interpolation [4]. Most of these interpolation formulas impose strict requirements on the number of sampling points and require the intervals between points to be regular. One approach to numerical differentiation and integration with irregular intervals is Lagrange's interpolation formula.

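Lagrange's interpolation formula is a classical interpolation method that constructs a polynomial passing through the function values at a given set of data points. For a given set of $n$ samples $f_i = f(x_i)$ at distinct points $x_i$, $i = 1, 2, \ldots, n$, Lagrange's interpolation formula is

$$f(x) \approx \sum_{i=1}^{n} f_i\,\ell_i(x), \qquad \ell_i(x) = \prod_{j=1,\, j \neq i}^{n} \frac{x - x_j}{x_i - x_j}. \tag{1}$$

Differentiating equation (1) with respect to $x$ once and twice gives the first-order and second-order derivatives:

$$f'(x) \approx \sum_{i=1}^{n} f_i\,\ell_i'(x), \qquad f''(x) \approx \sum_{i=1}^{n} f_i\,\ell_i''(x). \tag{2}$$

Integrating equation (1) with respect to $x$ from $a$ to $b$ gives the definite integral:

$$\int_a^b f(x)\,\mathrm{d}x \approx \sum_{i=1}^{n} f_i \int_a^b \ell_i(x)\,\mathrm{d}x. \tag{3}$$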

Lagrange's interpolation formula can perform differentiation and integration when intervals are irregular, and it can accommodate an arbitrary number of sampling points. However, each of these operations is tedious to carry out, so the formula is seldom applied directly.
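As a minimal sketch of the interpolation formula in equation (1), the following Python function evaluates the Lagrange polynomial at a point for irregularly spaced nodes. The function name `lagrange_interpolate` and the cubic test data are illustrative assumptions, not part of the original formulation.

```python
def lagrange_interpolate(xs, ys, x):
    """Evaluate the Lagrange polynomial through (xs[i], ys[i]) at x."""
    total = 0.0
    for i, (xi, yi) in enumerate(zip(xs, ys)):
        # Basis polynomial: equals 1 at xi and 0 at every other node.
        basis = 1.0
        for j, xj in enumerate(xs):
            if j != i:
                basis *= (x - xj) / (xi - xj)
        total += yi * basis
    return total


# Irregularly spaced samples of f(x) = x**3; four nodes reproduce a cubic exactly.
nodes = [0.0, 0.3, 1.1, 2.0]
values = [t**3 for t in nodes]
approx = lagrange_interpolate(nodes, values, 1.5)
```

Because the nodes need not be evenly spaced, the same function works for any set of distinct sampling points.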

To address this issue, Wang et al. [5] proposed Lagrange interpolation in matrix form using the Vandermonde matrix. Through practical examples, it was shown that this matrix formulation not only retains the adaptability of the original interpolation formula with respect to interval length and the number of sampling points, but also achieves higher computational efficiency and numerical accuracy in differentiation, integration, and extremum determination.

However, whether the interpolation formula takes the matrix form or the polynomial form, the Runge phenomenon [6] inevitably occurs when approximating certain types of functions on equidistant grids, rendering numerical results unreliable near the endpoints of the interpolation interval. The Runge phenomenon refers to the substantial oscillation and numerical instability exhibited by high-order polynomial interpolation functions near interval endpoints, especially when interpolating functions characterized by high-order derivatives or significant curvature. Furthermore, when equally spaced interpolation nodes are used, increasing the interpolation order exacerbates oscillations, resulting in significant interpolation errors.

The Runge phenomenon was first identified by the German mathematician Carl Runge [6] in 1901. To illustrate, consider the following function:

(4)

Select equally spaced sampling points over the interval $[-1, 1]$:

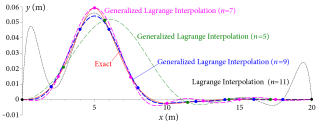

The results obtained using the classical Lagrange interpolation are shown in Figure 1 for $n$ = 5, 7, 9, and 11. Large oscillations and divergence are clearly observed in the results of the classical Lagrange interpolation, especially close to the boundaries of the interval. As $n$ increases, the oscillations and divergence do not diminish but worsen.

Figure 1. Illustration of the Runge phenomenon.
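The growth of the oscillations with the number of nodes can be checked numerically. The sketch below is only illustrative: it assumes the standard Runge test function $1/(1 + 25x^2)$ on $[-1, 1]$, which may differ from the function in equation (4), and the helper names are hypothetical.

```python
def runge(x):
    # Standard Runge test function (an assumption; equation (4) may differ).
    return 1.0 / (1.0 + 25.0 * x * x)


def lagrange_interpolate(xs, ys, x):
    """Evaluate the Lagrange polynomial through (xs[i], ys[i]) at x."""
    total = 0.0
    for i, (xi, yi) in enumerate(zip(xs, ys)):
        basis = 1.0
        for j, xj in enumerate(xs):
            if j != i:
                basis *= (x - xj) / (xi - xj)
        total += yi * basis
    return total


def max_interpolation_error(n, n_check=401):
    """Largest error on a fine grid when n equally spaced nodes are used."""
    xs = [-1.0 + 2.0 * i / (n - 1) for i in range(n)]
    ys = [runge(t) for t in xs]
    grid = [-1.0 + 2.0 * k / (n_check - 1) for k in range(n_check)]
    return max(abs(lagrange_interpolate(xs, ys, t) - runge(t)) for t in grid)


errors = {n: max_interpolation_error(n) for n in (5, 7, 9, 11)}
```

For this assumed test function the maximum error grows with the number of equally spaced nodes, which is the behaviour described above.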

To resolve the Runge phenomenon, a number of methods have been proposed over the past decades [7-12]. The Tikhonov regularization method was proposed by Boyd, in which the polynomial approximation was constructed by minimizing a weighted sum of the interpolation residual and a smoothness norm. Compared to conventional equidistant interpolation, the pointwise error was reduced by up to a factor of approximately 20,000. Subsequently, various single-interval polynomial interpolation approaches [14-19], such as Tikhonov regularization, Fourier extension, and Gaussian radial basis function methods, were comparatively analyzed [20]. These techniques typically control endpoint oscillations in high-order polynomial interpolation by incorporating regularization, improving the node distribution, constructing over-determined systems, or utilizing smoother basis functions. Although numerical experiments demonstrated their high approximation accuracy, these methods still faced theoretical and efficiency challenges, including low computational efficiency and numerical ill-conditioning.

Instead of relying on polynomial interpolation to overcome the Runge phenomenon, an immune genetic algorithm-based optimal parameter searching method was proposed by Lin and Sun [21]. This method searches for an optimal parameter sequence that minimizes an energy function, thereby constructing parametric curves that avoid Runge oscillations. However, genetic algorithms generally suffer from parameter sensitivity, uncertainty in stochastic convergence, high computational costs, and limited scalability, which restrict their applicability in practical engineering applications.

The objective of this study is to eliminate Runge oscillations by proposing a generalized Lagrange interpolation formula in the matrix form. Within this framework, suitable coordinate functions are selected according to the characteristics of the target function, so that the interpolation formula possesses similar properties. This enhances computational versatility, accuracy, and efficiency, and significantly improves convergence near the interval boundaries. The proposed method is readily applicable to numerical computations in engineering practice.

In Section 2, the generalized Lagrange interpolation is described in detail, together with numerical methods for even and odd target functions and the use of classical normal modes in free vibration of beams as coordinate functions. The proposed method effectively eliminates the Runge phenomenon and is equally applicable to numerical differentiation, integration, root-finding, and determining extrema. To validate the effectiveness of the proposed method, six numerical examples are presented in Section 3 to demonstrate the versatility, efficiency, and accuracy of the method. Some conclusions are drawn in Section 4.

2. Formulation

2.1. Generalized Lagrange Interpolation in the Matrix Form

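Let $f(x)$ be a single-valued smooth function of the variable $x$. If $\varphi_k(x)$, $k = 1, 2, \ldots$, is a set of basis (coordinate) functions suitable for $f(x)$, then $f(x)$ can be expanded in terms of the basis functions as

$$f(x) = \sum_{k=1}^{\infty} c_k\,\varphi_k(x). \tag{5}$$

In practice, only $n$ terms in the expansion are used, resulting in an approximation of the function $f(x)$:

$$f(x) \approx \sum_{k=1}^{n} c_k\,\varphi_k(x). \tag{6}$$

The coefficients $c_k$, $k = 1, 2, \ldots, n$, can be estimated from a set of $n$ samples, which are taken at distinct points $x_1, x_2, \ldots, x_n$ to obtain the corresponding values $f_1, f_2, \ldots, f_n$ of the function. This set of samples leads to a system of linear algebraic equations

$$\mathbf{V}\mathbf{c} = \mathbf{f}, \qquad V_{ik} = \varphi_k(x_i), \quad i, k = 1, 2, \ldots, n, \tag{7}$$

with $\mathbf{c} = [c_1, \ldots, c_n]^{T}$ and $\mathbf{f} = [f_1, \ldots, f_n]^{T}$. Solving for the unknown coefficients, $\mathbf{c} = \mathbf{V}^{-1}\mathbf{f}$, the expansion (6) can be written in the matrix form as

$$f(x) \approx \boldsymbol{\varphi}(x)\,\mathbf{V}^{-1}\mathbf{f}, \qquad \boldsymbol{\varphi}(x) = [\varphi_1(x), \varphi_2(x), \ldots, \varphi_n(x)]. \tag{8}$$

Equation (8) is the generalized Lagrange interpolation in the matrix form in terms of the coordinate functions $\varphi_k(x)$. The derivatives of $f(x)$ can be easily obtained by differentiating equation (8) with respect to $x$:

$$f'(x) \approx \boldsymbol{\varphi}'(x)\,\mathbf{V}^{-1}\mathbf{f}, \tag{10}$$

$$f''(x) \approx \boldsymbol{\varphi}''(x)\,\mathbf{V}^{-1}\mathbf{f}. \tag{11}$$

The definite integral of $f(x)$ between $a$ and $b$ can also be easily obtained by integrating equation (8) from $a$ to $b$:

$$\int_a^b f(x)\,\mathrm{d}x \approx \left[\int_a^b \boldsymbol{\varphi}(x)\,\mathrm{d}x\right]\mathbf{V}^{-1}\mathbf{f},$$

where $\mathbf{V}$ denotes the generalized Vandermonde matrix. Depending on the characteristics of function $f(x)$, suitable coordinate functions can be selected so that the generalized Lagrange interpolation is more efficient and more accurate. Some cases are discussed in the following subsections.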
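The construction in this subsection can be sketched in a few lines of Python with NumPy. This is a minimal illustration rather than the authors' implementation: the function name `generalized_lagrange` and the choice of coordinate functions 1, x, and exp(x) are assumptions made only to show that a basis suited to the target function reproduces it.

```python
import numpy as np


def generalized_lagrange(basis, x_nodes, f_nodes):
    """Return a callable interpolant built from the given coordinate functions."""
    x_nodes = np.asarray(x_nodes, dtype=float)
    # Generalized Vandermonde matrix: V[i, k] = basis[k](x_nodes[i]).
    V = np.column_stack([phi(x_nodes) for phi in basis])
    coeffs = np.linalg.solve(V, np.asarray(f_nodes, dtype=float))

    def interpolant(x):
        x = np.asarray(x, dtype=float)
        return sum(c * phi(x) for c, phi in zip(coeffs, basis))

    return interpolant


# Illustrative choice: f(x) = exp(x) sampled at three irregular points, with
# coordinate functions 1, x, exp(x) (the last one matches f exactly).
basis = [lambda x: np.ones_like(x), lambda x: x, np.exp]
nodes = np.array([0.0, 0.4, 1.0])
p = generalized_lagrange(basis, nodes, np.exp(nodes))
```

Solving the linear system once and reusing the coefficients is equivalent to applying the inverse of the generalized Vandermonde matrix to the sample vector, but avoids forming the inverse explicitly.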

2.2. Classical Lagrange Interpolation in the Matrix Form

In the absence of any prescribed conditions, $f(x)$ can be represented by a polynomial of degree $n-1$, i.e., the coordinate functions are $\varphi_k(x) = x^{k-1}$, $k = 1, 2, \ldots, n$,

$$f(x) \approx \sum_{k=1}^{n} c_k\,x^{k-1}. \tag{12}$$

For a sample of size $n$, equation (12) can be written in the matrix form as

$$f(x) \approx [1, x, x^2, \ldots, x^{n-1}]\,\mathbf{V}^{-1}\mathbf{f}, \tag{13}$$

where $\mathbf{f} = [f(x_1), \ldots, f(x_n)]^{T}$ is the vector of sampled values and $\mathbf{V}$ is the Vandermonde matrix with entries

$$V_{ik} = x_i^{k-1}, \quad i, k = 1, 2, \ldots, n. \tag{14}$$

Equation (13) is the classical Lagrange interpolation in matrix form, which applies to an asymmetric set of data points subject only to the condition of belonging to $L^2$. A detailed study of Lagrange interpolation in the matrix form is presented by Wang et al. [5].

2.3. Even Target Functions

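When $f(x)$ is an even function, i.e., $f(-x) = f(x)$, polynomials of even powers can be employed as coordinate functions, i.e., $\varphi_k(x) = x^{2(k-1)}$, $k = 1, 2, \ldots, n$, to yield

$$f(x) \approx [1, x^2, x^4, \ldots, x^{2(n-1)}]\,\mathbf{V}^{-1}\mathbf{f}, \tag{15}$$

where $\mathbf{f}$ is the vector of sampled values and the generalized Vandermonde matrix has the entries

$$V_{ik} = x_i^{2(k-1)}, \quad i, k = 1, 2, \ldots, n. \tag{16}$$

The corresponding first and second numerical derivatives and the definite integral are given by

$$f'(x) \approx [0, 2x, 4x^3, \ldots, 2(n-1)x^{2n-3}]\,\mathbf{V}^{-1}\mathbf{f}, \tag{17}$$

$$f''(x) \approx [0, 2, 12x^2, \ldots, 2(n-1)(2n-3)x^{2n-4}]\,\mathbf{V}^{-1}\mathbf{f}, \tag{18}$$

$$\int_a^b f(x)\,\mathrm{d}x \approx \left[x, \frac{x^3}{3}, \frac{x^5}{5}, \ldots, \frac{x^{2n-1}}{2n-1}\right]_a^b \mathbf{V}^{-1}\mathbf{f}. \tag{19}$$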

2.4. Odd Target Functions

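If $f(x)$ is an odd function, i.e., $f(-x) = -f(x)$, polynomials of odd powers can be employed as coordinate functions, i.e., $\varphi_k(x) = x^{2k-1}$, $k = 1, 2, \ldots, n$. The generalized Lagrange interpolation, the generalized Vandermonde matrix, the corresponding derivatives, and the definite integral are

$$f(x) \approx [x, x^3, x^5, \ldots, x^{2n-1}]\,\mathbf{V}^{-1}\mathbf{f}, \tag{20}$$

$$V_{ik} = x_i^{2k-1}, \quad i, k = 1, 2, \ldots, n, \tag{21}$$

$$f'(x) \approx [1, 3x^2, 5x^4, \ldots, (2n-1)x^{2n-2}]\,\mathbf{V}^{-1}\mathbf{f}, \tag{22}$$

$$f''(x) \approx [0, 6x, 20x^3, \ldots, (2n-1)(2n-2)x^{2n-3}]\,\mathbf{V}^{-1}\mathbf{f}, \tag{23}$$

$$\int_a^b f(x)\,\mathrm{d}x \approx \left[\frac{x^2}{2}, \frac{x^4}{4}, \frac{x^6}{6}, \ldots, \frac{x^{2n}}{2n}\right]_a^b \mathbf{V}^{-1}\mathbf{f}. \tag{24}$$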

2.5. Classical Normal Modes

When $f(x)$ satisfies certain boundary conditions, such as the deflections of supported beams, the classical normal modes of a prismatic beam of length $L$ can be employed as coordinate functions because they inherently satisfy the relevant boundary conditions:

1) Choose the free vibration modes of a simply supported beam to satisfy $f(0) = f(L) = 0$ and $f''(0) = f''(L) = 0$.

2) Choose the free vibration modes of a clamped beam to satisfy $f(0) = f(L) = 0$ and $f'(0) = f'(L) = 0$.

3) Choose the free vibration modes of a cantilever beam to satisfy $f(0) = f'(0) = 0$ and $f''(L) = f'''(L) = 0$.

Additional boundary configurations can be handled analogously, and details of these classical normal modes are provided by Blevins. An example using the free vibration modes of a clamped beam as coordinate functions is presented in Section 3.6.

3. Numerical Examples

In this section, six examples are presented to illustrate the versatility and superiority of the method of generalized Lagrange interpolation in the matrix form.

3.1. Example 1 - Even Functions

Consider the even function $f(x) = \cos(\pi x)$ as the target function. Because uniformly spaced sampling points offer high efficiency and accuracy, five sampling points with regular intervals are listed in Table 1. The exact first-order derivatives of $f(x)$ at $x$ = 0.1, 0.2, 0.3, 0.4, 0.5 are given in Table 2, and the definite integral of the function over the interval [0, 0.5] is

$$\int_0^{0.5} \cos(\pi x)\,\mathrm{d}x = \frac{1}{\pi} \approx 0.318310.$$

These results are used to assess the efficiency and accuracy of the results obtained using the proposed method.

Table 1. Five sampling points with regular intervals.

| $x$ | 0.000 | 0.125 | 0.250 | 0.375 | 0.500 |
|---|---|---|---|---|---|
| $f(x)$ | 1.00000 | 0.92388 | 0.70711 | 0.38268 | 0.00000 |

Table 2. Exact values of the first-order derivative.

| $x$ | 0.1 | 0.2 | 0.3 | 0.4 | 0.5 |
|---|---|---|---|---|---|
| $f'(x)$ | −0.97081 | −1.84658 | −2.54160 | −2.98783 | −3.14159 |

3.1.1. Using the Classical Lagrange Interpolation

By using the sampling points at $x$ = 0, 0.125, 0.25, 0.375, 0.5 and applying equation (14), the corresponding Vandermonde matrix and the vector of sampled values are

Differentiating equation (13) with respect to $x$, the first-order derivative can be determined

The results of numerical differentiation using the classical Lagrange interpolation are compared with the exact values in Table 3. The relative error ranges from −0.37% to 0.29%, and it increases as the evaluation point approaches the domain boundary. Integrating equation (13) with respect to $x$, the definite integral is determined as

The error between the numerical integral and the exact value is −0.00084%.

Table 3. Numerical differentiation error of classical Lagrange interpolation.

| $x$ | 0.1 | 0.2 | 0.3 | 0.4 | 0.5 |
|---|---|---|---|---|---|
| Exact values | −0.97081 | −1.84658 | −2.54160 | −2.98783 | −3.14159 |
| Numerical values | −0.97363 | −1.84589 | −2.54080 | −2.99122 | −3.13002 |
| Error (%) | 0.2905 | −0.0374 | −0.0315 | 0.1135 | −0.3683 |

3.1.2. Using the Generalized Lagrange Interpolation

By using the sampling points at $x$ = 0, 0.125, 0.25, 0.375, 0.5 and applying equation (16), the corresponding Vandermonde matrix and the vector of sampled values are

Using equation (17), the first-order derivative can be determined, and the numerical results of the generalized Lagrange interpolation are compared with the corresponding exact values in Table 4. With the exact derivatives given to five decimal places, the proposed method agrees with them to four or five decimal places. The definite integral is determined from equation (19) as

with a relative error of −0.000008%.

Table 4. Numerical differentiation error of generalized Lagrange interpolation.

| $x$ | 0.1 | 0.2 | 0.3 | 0.4 | 0.5 |
|---|---|---|---|---|---|
| Exact values | −0.97081 | −1.84658 | −2.54160 | −2.98783 | −3.14159 |
| Numerical values | −0.97081 | −1.84658 | −2.54160 | −2.98784 | −3.14156 |
| Error (%) | 0 | 0 | 0 | | |

The values of relative error demonstrate that the generalized Lagrange interpolation delivers markedly higher accuracy for both differentiation and integration than the classical method.
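The even-power computation of this example can be reproduced with a short NumPy sketch. It assumes the target function is $\cos(\pi x)$, which is consistent with the sampled values in Table 1, and uses the even powers 0, 2, 4, 6, 8 as coordinate functions; the helper names `derivative` and `integral` are illustrative.

```python
import numpy as np

x_nodes = np.array([0.0, 0.125, 0.25, 0.375, 0.5])
f_nodes = np.cos(np.pi * x_nodes)      # assumed target, consistent with Table 1
powers = 2 * np.arange(len(x_nodes))   # even powers 0, 2, 4, 6, 8

# Generalized Vandermonde matrix with entries x_i**p_k, then the coefficient solve.
V = x_nodes[:, None] ** powers
coeffs = np.linalg.solve(V, f_nodes)


def derivative(x):
    # d/dx of sum c_k * x**p_k; the constant term (p = 0) contributes nothing.
    return sum(c * p * x ** (p - 1) for c, p in zip(coeffs[1:], powers[1:]))


def integral(a, b):
    # Term-by-term antiderivative x**(p + 1) / (p + 1) evaluated between a and b.
    return sum(c * (b ** (p + 1) - a ** (p + 1)) / (p + 1)
               for c, p in zip(coeffs, powers))


x_eval = np.array([0.1, 0.2, 0.3, 0.4, 0.5])
numerical = derivative(x_eval)
exact = -np.pi * np.sin(np.pi * x_eval)
area = integral(0.0, 0.5)
```

Comparing `numerical` with `exact`, and `area` with $1/\pi$, gives a quick check of the accuracy reported for the generalized interpolation.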

3.2. Example 2 - Odd Functions

Consider the odd function $f(x) = \tanh x$. Six sampling points with regular intervals are listed in Table 5, and the exact first-order derivatives of $f(x)$ at $x$ = 0.1, 0.3, 0.5, 0.7, 0.9 are given in Table 6. The definite integral of the function over the interval [0, 1] is

$$\int_0^1 \tanh x\,\mathrm{d}x = \ln(\cosh 1) \approx 0.433781.$$

Table 5. Six sampling points with regular intervals.

| $x$ | 0.0 | 0.2 | 0.4 | 0.6 | 0.8 | 1.0 |
|---|---|---|---|---|---|---|
| $f(x)$ | 0.00000 | 0.19738 | 0.37995 | 0.53705 | 0.66404 | 0.76159 |

Table 6. Exact values of the first-order derivative.

| $x$ | 0.1 | 0.3 | 0.5 | 0.7 | 0.9 |
|---|---|---|---|---|---|
| $f'(x)$ | 0.99007 | 0.91514 | 0.78645 | 0.63474 | 0.48692 |

3.2.1. Using the Classical Lagrange Interpolation

When the six sampling points at $x$ = 0, 0.2, 0.4, 0.6, 0.8, 1.0 are used, the corresponding Vandermonde matrix and the vector of sampled values are

The numerical derivatives are summarized in Table 7; the relative error ranges from −0.05% to 0.04%. As in the case of even functions, the accuracy of the numerical results deteriorates near the domain boundaries. The numerical integral is given by

and the relative error between the numerical integral and the exact value is −0.0022%.

Table 7. Numerical differentiation using the classical Lagrange interpolation.

| $x$ | 0.1 | 0.3 | 0.5 | 0.7 | 0.9 |
|---|---|---|---|---|---|
| Exact values | 0.99007 | 0.91514 | 0.78645 | 0.63474 | 0.48692 |
| Numerical values | 0.99049 | 0.91507 | 0.78645 | 0.63478 | 0.48667 |
| Error (%) | 0.0424 | −0.0077 | 0 | 0.0063 | −0.0513 |

3.2.2. Using the Generalized Lagrange Interpolation

When the generalized Lagrange interpolation for an odd target function is applied, the condition $f(0) = 0$ is implicitly used. As a result, only five sampling points at $x$ = 0.2, 0.4, 0.6, 0.8, 1.0 are used, and the corresponding Vandermonde matrix and the vector of sampled values are

Using equation (22), the numerical derivatives are evaluated, and the results are presented in Table 8, in which the relative errors range from −0.045% to 0.0032%. From equation (24), the numerical integral is

and the relative error is −0.0006%. These results demonstrate a clear advantage in accuracy of the generalized Lagrange interpolation over the classical Lagrange interpolation method.

Table 8. Numerical differentiation using the generalized Lagrange interpolation.

| $x$ | 0.1 | 0.3 | 0.5 | 0.7 | 0.9 |
|---|---|---|---|---|---|
| Exact values | 0.99007 | 0.91514 | 0.78645 | 0.63474 | 0.48692 |
| Numerical values | 0.99007 | 0.91514 | 0.78644 | 0.63476 | 0.48670 |
| Error (%) | 0 | 0 | | 0.0032 | −0.0452 |

3.3. Example 3 - Root of a Function

Consider the even function $f(x) = \cos x - x^2$. The exact root of $f(x)$ within the interval [0, 1] is 0.824132312, which serves as a reference for evaluating the efficiency and accuracy of the results obtained using the proposed method.

The Taylor series expansion of the function is

$$f(x) = 1 - \frac{3}{2}x^2 + \frac{1}{24}x^4 - \frac{1}{720}x^6 + \cdots$$

It shows that if a three-point generalized Lagrange interpolation for an even function is used, the square of the estimated root is governed by a quadratic equation, the root of which is readily calculated.

3.3.1. The First Iteration

Sample the function at three points with regular intervals as listed in Table 9.

Table 9. Sampling points for the first iteration.

| $x$ | 0.75 | 0.875 | 1.0 |
|---|---|---|---|
| $f(x)$ | 0.169188869 | −0.124628142 | −0.459697694 |

By using these three sampling points and applying equation (16), the corresponding Vandermonde matrix and the vector of sampled values are

The root of the function is obtained by setting equation (15) equal to zero as follows:

from which the root in the interval [0, 1] is 0.8241341. The relative error with respect to the exact root in the first iteration is 0.0002%.

3.3.2. The Second Iteration

To improve the accuracy, a new set of three sampling points is selected for this iteration, as shown in Table 10. The root found in the first iteration is used as the centre point, and the sampling interval can be reduced significantly because the root from the first iteration is already quite accurate.

Table 10. Sampling points for the second iteration.

| $x$ | 0.814 | 0.824 | 0.834 |
|---|---|---|---|
| $f(x)$ | 0.023999776 | 0.000315174 | −0.023637357 |

The corresponding Vandermonde matrix and the vector are given by

The root of the function is obtained by

from which the corresponding root is determined as 0.824132312, which is the same as the exact root.

It is demonstrated that the proposed method can achieve a high-accuracy numerical solution with only two iterations. By employing this approach, one can avoid the difficulties of the Newton-Raphson method, such as a vanishing derivative and a non-convergent loop. Moreover, at any stage of the iteration, three new appropriate samples can be chosen for the fit.
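The two iterations of this example can be sketched as follows. The code assumes the target function is $\cos x - x^2$, which is consistent with the samples in Tables 9 and 10; the function name `even_root` is hypothetical, and the second iteration centres the nodes on the first estimate rather than on the rounded values of Table 10.

```python
import numpy as np


def f(x):
    # Assumed target, consistent with the samples in Tables 9 and 10.
    return np.cos(x) - x**2


def even_root(x_nodes, lo, hi):
    """Fit c0 + c1*t + c2*t**2 with t = x**2 and return the root x in [lo, hi]."""
    x_nodes = np.asarray(x_nodes, dtype=float)
    V = np.column_stack([np.ones_like(x_nodes), x_nodes**2, x_nodes**4])
    c0, c1, c2 = np.linalg.solve(V, f(x_nodes))
    for t in np.roots([c2, c1, c0]):      # quadratic in t = x**2
        if abs(t.imag) < 1e-12 and t.real >= 0:
            x = float(np.sqrt(t.real))
            if lo <= x <= hi:
                return x
    raise ValueError("no root found in the requested interval")


root1 = even_root([0.75, 0.875, 1.0], 0.0, 1.0)
root2 = even_root([root1 - 0.01, root1, root1 + 0.01], 0.0, 1.0)
```

Because each step only solves a 3 x 3 system and a quadratic, no derivative of the target function is needed.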

3.4. Example 4 - Extrema of a Function

Consider the odd function $f(x) = 10\sin x - \tan x$. In the interval [0, 1.5], the maximum value 6.949224835 occurs at $x$ = 1.088111560.

3.4.1. The First Iteration

Sample the function at three points with regular intervals as listed in Table 11.

Table 11. Sampling points for the first iteration.

| $x$ | 1.0 | 1.1 | 1.2 |
|---|---|---|---|
| $f(x)$ | 6.857302123 | 6.947313944 | 6.748239238 |

By using these three sampling points and applying equation (21), the corresponding Vandermonde matrix and the vector of sampled values are

The critical point of the function is obtained by setting equation (22) equal to zero, as follows:

from which the root in the interval [0, 1.5] is 1.085043282, and the corresponding extremum of the function is given by

The relative error with respect to the exact extremum in the first iteration is −0.00179%.

3.4.2. The Second Iteration

To improve the accuracy, a new set of three sampling points is selected for this iteration, as shown in Table 12. As in Example 3, the centre point is chosen as the root from the first iteration, and the sampling interval is significantly reduced.

Table 12. Sampling points for the second iteration.

| $x$ | 1.080 | 1.085 | 1.090 |
|---|---|---|---|
| $f(x)$ | 6.948360731 | 6.949096775 | 6.949177322 |

The corresponding Vandermonde matrix and the vector are given by

The critical point of function is obtained by

from which the root is determined as 1.088112745, and the corresponding local extremum of is

which is the same as the exact extremum up to the eighth decimal place. Using equation (23), the value of the second-order derivative at the critical point is

indicating that the local extremum 6.949224834 is a maximum.
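A corresponding sketch for the extremum search uses the odd powers $x$, $x^3$, $x^5$. It assumes the target function is $10\sin x - \tan x$, which is consistent with the samples in Tables 11 and 12; the function name `odd_critical_point` is hypothetical, and the second iteration centres the nodes on the first estimate rather than on the rounded values of Table 12.

```python
import numpy as np


def f(x):
    # Assumed target, consistent with the samples in Tables 11 and 12.
    return 10.0 * np.sin(x) - np.tan(x)


def odd_critical_point(x_nodes, lo, hi):
    """Fit c1*x + c2*x**3 + c3*x**5; return (x*, fitted f''(x*)) with x* in [lo, hi]."""
    x_nodes = np.asarray(x_nodes, dtype=float)
    V = np.column_stack([x_nodes, x_nodes**3, x_nodes**5])
    c1, c2, c3 = np.linalg.solve(V, f(x_nodes))
    # Fitted derivative: c1 + 3*c2*t + 5*c3*t**2 with t = x**2.
    for t in np.roots([5.0 * c3, 3.0 * c2, c1]):
        if abs(t.imag) < 1e-12 and t.real >= 0:
            x = float(np.sqrt(t.real))
            if lo <= x <= hi:
                curvature = 6.0 * c2 * x + 20.0 * c3 * x**3
                return x, curvature
    raise ValueError("no critical point found in the requested interval")


x1, _ = odd_critical_point([1.0, 1.1, 1.2], 0.0, 1.5)
x2, curvature = odd_critical_point([x1 - 0.005, x1, x1 + 0.005], 0.0, 1.5)
f_max = float(f(x2))
```

A negative `curvature` at the second estimate indicates a maximum, mirroring the second-derivative check described above.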

3.5. Example 5 - Functions Exhibiting Runge Phenomenon

As discussed in Section 1, when interpolating certain functions in a finite interval using the classical Lagrange interpolation, there may be large oscillations and divergence close to the endpoints of the interpolation intervals, resulting in the so-called Runge phenomenon.

The Runge phenomenon can be effectively eliminated by applying the generalized Lagrange interpolation with appropriate coordinate functions. Without loss of generality, consider a function $f(x)$ with endpoints $(x_a, f_a)$ and $(x_b, f_b)$. By adding a linear function as

$$g(x) = f(x) - \left[f_a + \frac{f_b - f_a}{x_b - x_a}\,(x - x_a)\right], \tag{25}$$

the endpoints of function $g(x)$ are transformed to $(x_a, 0)$ and $(x_b, 0)$. By a change of variable $\xi = (x - x_a)/(x_b - x_a)$, the domain of function $g$ becomes [0, 1].

Because function $g$ vanishes at $\xi = 0$ and $\xi = 1$, while its slope at the two ends is in general nonzero, the shape functions of the free vibration of a simply supported beam of unit length can be employed as the coordinate functions, which satisfy the required boundary conditions and are given by

$$\varphi_k(\xi) = \sin(k\pi\xi), \quad k = 1, 2, \ldots \tag{26}$$

To illustrate, consider the function $f(x)$ in equation (4), with endpoints (−1, 1/6) and (1, 1/16). Using equation (25), function $g(x)$ has endpoints (−1, 0) and (1, 0). Letting $\xi = (x + 1)/2$, the transformed function

(27)

has endpoints (0, 0) and (1, 0).

3.5.1. Classical Lagrange Interpolation

Sample the function

in equation (

25) using

equally spaced points within the interval

=0, 1, 2, ...,

. For

=11, the corresponding Vandermonde matrix

and the vector

are given by

For equation (

13), the expression for the classical Lagrange interpolation is

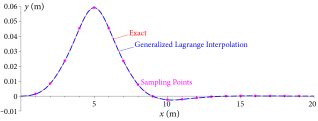

which is shown in

Figure 2(a) as black dashed line. It is seen that the classical Lagrange interpolation exhibits the typical Runge phenomenon near the endpoints of the interval.

3.5.2. Generalized Lagrange Interpolation

The shape functions in equation (26) are employed as coordinate functions. Because the zero-value conditions at the two endpoints are inherently satisfied, nine sampling points at $\xi$ = 0.1, 0.2, 0.3, ..., 0.9 are used. The corresponding Vandermonde matrix and the vector of sampled values are

Using equation (8), the expression for the generalized Lagrange interpolation is

which is shown in Figure 2(b) as a blue dashed line.

Figure 2. Comparison between numerical and exact results.

It is observed that the generalized Lagrange interpolation method effectively eliminates the Runge phenomenon and demonstrates significantly higher approximation accuracy across the entire domain as compared to the classical Lagrange interpolation. As the number of sampling points increases, the Runge phenomenon in the classical method becomes more pronounced, whereas the accuracy of the generalized Lagrange interpolation is further improved.
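The comparison can be sketched numerically as follows. This is an illustration under stated assumptions: the standard Runge test function $1/(1 + 25x^2)$ stands in for equation (4), the endpoint line is subtracted before fitting nine sine modes at nine interior nodes, and NumPy's `Polynomial.fit` with degree 10 on eleven nodes plays the role of the classical interpolation.

```python
import numpy as np


def runge(x):
    # Standard Runge test function (an assumption; equation (4) may differ).
    return 1.0 / (1.0 + 25.0 * x**2)


x_a, x_b = -1.0, 1.0
f_a, f_b = runge(x_a), runge(x_b)


def linear_part(x):
    # Straight line through the two endpoints, removed so the remainder vanishes there.
    return f_a + (f_b - f_a) * (x - x_a) / (x_b - x_a)


# Nine interior nodes xi = 0.1, ..., 0.9 on the unit interval and nine sine modes.
xi_nodes = np.arange(1, 10) / 10.0
x_nodes = x_a + (x_b - x_a) * xi_nodes
modes = np.arange(1, 10)
V = np.sin(np.pi * np.outer(xi_nodes, modes))
coeffs = np.linalg.solve(V, runge(x_nodes) - linear_part(x_nodes))


def sine_interpolant(x):
    x = np.asarray(x, dtype=float)
    xi = (x - x_a) / (x_b - x_a)
    return linear_part(x) + np.sin(np.pi * np.outer(xi, modes)) @ coeffs


# Classical polynomial interpolation on eleven equally spaced nodes, for comparison.
x_poly = np.linspace(x_a, x_b, 11)
poly = np.polynomial.Polynomial.fit(x_poly, runge(x_poly), deg=10)

x_check = np.linspace(x_a, x_b, 401)
err_sine = np.max(np.abs(sine_interpolant(x_check) - runge(x_check)))
err_poly = np.max(np.abs(poly(x_check) - runge(x_check)))
```

For this assumed test function, `err_sine` stays well below `err_poly`, since the sine modes cannot oscillate freely at the endpoints.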

3.6. Example 6 - Deformation of a Beam on Elastic Foundation

Consider a beam AB on an elastic foundation, which is clamped at both ends, as shown in Figure 3. The beam is subjected to a concentrated load

, the width of the beam is

, the moment of inertia is

, and the Young’s modulus is

. From Winkler’s assumption,

, where

is the modulus of the foundation.

Figure 3. Clamped-clamped beam on elastic foundation.

By using the Dirac delta function, the concentrated load can be expressed as

The differential equation governing the deflection is [23]

(28)

Because both ends of the beam are clamped, the boundary conditions are

Applying the method of Laplace transform, it can be shown that the solution of differential equation (28) is

(29)

in which

From the boundary conditions and can be determined as

where

In order to induce significant deflection in the beam for illustrative purposes, the following parameters are adopted: Young’s modulus = 100 GPa, the width, thickness, and height of the beam are = 1 m, = 3 m, = 20 m, the modulus of the foundation N/m³, and the concentrated load = 22.5 GN. Thus, = 0.1 and = 0.5946, in which the moment of inertia is calculated as .

3.6.1. Numerical Beam Deflection by the Classical Lagrange Interpolation

Eleven sample points are selected at

=0, 2, 4, 6, ..., 20. From the analytical deflection (

29), the corresponding Vandermonde matrix

and the vector

are given by

According to equation (

13), the expression for the beam deflection from Lagrange interpolation is

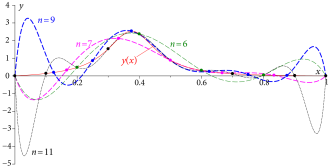

which is shown in

Figure 5 as black dashed line.

3.6.2. Numerical Beam Deflection by the Generalized Lagrange Interpolation

The shape functions of a supported beam are usually derived from the eigen-solutions of its free vibration. If the boundary characteristics of a supported beam, such as end displacements and rotations, are consistent with those of a more complex structural element, the shape functions of the beam can be employed as coordinate functions, so that the boundary conditions are satisfied intrinsically.

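For a beam of length $L$ with clamped-clamped boundary conditions, the modal shape function of free vibration is given by

$$\varphi_n(x) = \cosh\frac{\lambda_n x}{L} - \cos\frac{\lambda_n x}{L} - \sigma_n\left(\sinh\frac{\lambda_n x}{L} - \sin\frac{\lambda_n x}{L}\right), \tag{30}$$

where $\sigma_n = (\cosh\lambda_n - \cos\lambda_n)/(\sinh\lambda_n - \sin\lambda_n)$, and $\lambda_n$ denotes the $n$th positive root of the characteristic equation

$$\cos\lambda \cosh\lambda = 1.$$

The characteristic roots of the first nine modes are listed in Table 13, and the corresponding beam shape functions are shown in Figure 4.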

Table 13. Characteristic roots of the first nine clamped-clamped modes.

| Mode | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 |
|---|---|---|---|---|---|---|---|---|---|
| Root | 4.7300 | 7.8532 | 10.9956 | 14.1372 | 17.2788 | 20.4204 | 23.5619 | 26.7035 | 29.8451 |

Figure 4. Shape functions of the first nine beam modes.
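The characteristic roots in Table 13 can be checked with a short bisection sketch. It relies on the standard frequency equation of a clamped-clamped beam, $\cos z \cosh z = 1$, whose $n$th positive root lies close to $(n + 1/2)\pi$; the function names and the bracket half-width are illustrative choices.

```python
import math


def characteristic(z):
    # Frequency equation of a clamped-clamped beam: cos(z) * cosh(z) - 1 = 0.
    return math.cos(z) * math.cosh(z) - 1.0


def clamped_root(n, half_width=0.1, tol=1e-12):
    """n-th positive root, bracketed around (n + 1/2) * pi and refined by bisection."""
    centre = (n + 0.5) * math.pi
    lo, hi = centre - half_width, centre + half_width
    f_lo = characteristic(lo)
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        f_mid = characteristic(mid)
        if (f_lo < 0) == (f_mid < 0):
            # Same sign as the lower end: the root lies in the upper half.
            lo, f_lo = mid, f_mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


roots = [clamped_root(n) for n in range(1, 10)]
```

Bisection is used here instead of Newton's method because the hyperbolic cosine makes the function grow very quickly for the higher modes.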

Selecting nine sampling points at $x$ = 2, 4, 6, 8, ..., 18 m, the corresponding Vandermonde matrix and the vector of sampled values are

By applying equation (8), the deflection obtained using the generalized Lagrange interpolation is given by

which is shown in Figure 5 as a blue dashed line.

Figure 5. Comparison between numerical and exact results.

Figure 5 presents a comparison of beam deflections obtained using the classical Lagrange interpolation, the generalized Lagrange interpolation, and the exact theoretical solution. It can be observed that the classical Lagrange interpolation yields accurate results only near the mid-span of the beam. Close to the boundaries, the accuracy of the interpolation result deteriorates rapidly, leading to the Runge phenomenon with significant discrepancies from the exact solution.

In contrast, the generalized Lagrange interpolation demonstrates high accuracy across the entire beam, even with fewer sampling points. More accurate solutions are achieved for $n \geq 7$, with fitting performance further improving as $n$ increases. This is attributed to the fact that the boundary conditions are inherently embedded in the coordinate functions, which markedly enhances convergence near the boundaries and effectively eliminates the Runge phenomenon commonly observed with classical Lagrange polynomials.

The result of the generalized Lagrange interpolation, when nineteen sampling points at $x$ = 1, 2, ..., 19 (m) are used, is shown in Figure 6. It is seen that the result agrees with the exact result extremely well, with almost no visual difference.

It is noted that, for the classical Lagrange interpolation, two extra sampling points at $x$ = 0, 20 (m) are needed, and the Runge phenomenon is very severe, especially at the left end. Because of the Runge phenomenon, the overall performance of the classical Lagrange interpolation does not improve when more sampling points are used.
